Interrater agreement and interrater reliability: Key concepts, approaches, and applications - ScienceDirect

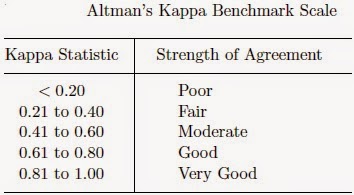

K. Gwet's Inter-Rater Reliability Blog: Benchmarking Agreement Coefficients | Inter-rater reliability: Cohen kappa, Gwet AC1/AC2, Krippendorff Alpha

Generalized Cohen's Kappa: A Novel Inter-rater Reliability Metric for Non-mutually Exclusive Categories | SpringerLink

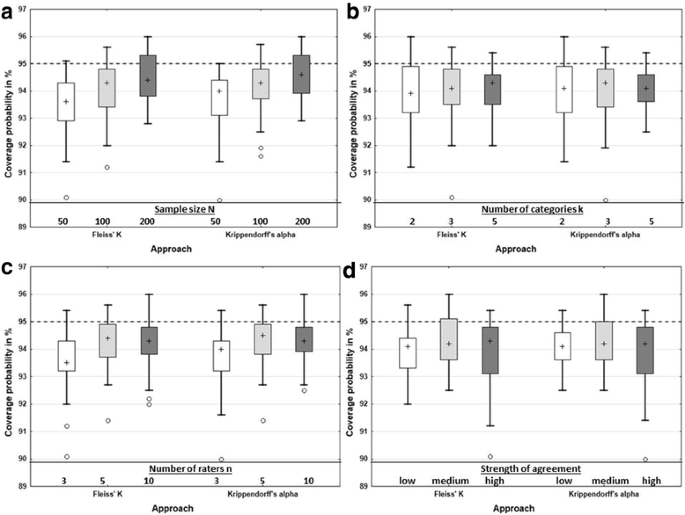

Measuring inter-rater reliability for nominal data – which coefficients and confidence intervals are appropriate? | BMC Medical Research Methodology | Full Text

The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

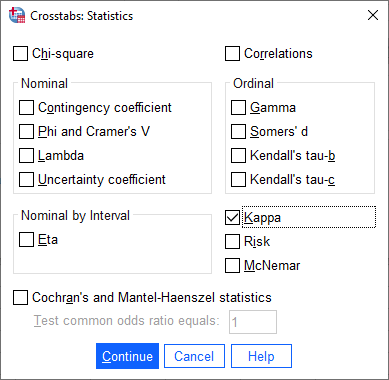

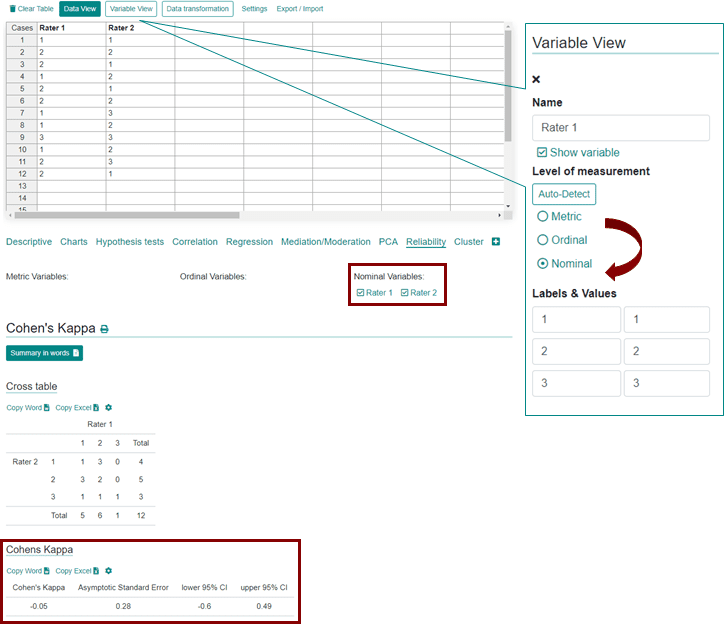

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of the output using a relevant example | Laerd Statistics

![[PDF] Computing Inter-Rater Reliability for Observational Data: An Overview and Tutorial. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e3ee8537cead698052a101cd6c5925d08820f6f2/17-Table4-1.png)

[PDF] Computing Inter-Rater Reliability for Observational Data: An Overview and Tutorial. | Semantic Scholar

![[PDF] Large sample standard errors of kappa and weighted kappa. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/f2c9636d43a08e20f5383dbf3b208bd35a9377b0/2-Table1-1.png)